
Moto 360 Review! 2 Months with an Android Wear Smartwatch

Inbox by Gmail First Impressions & Invitations!

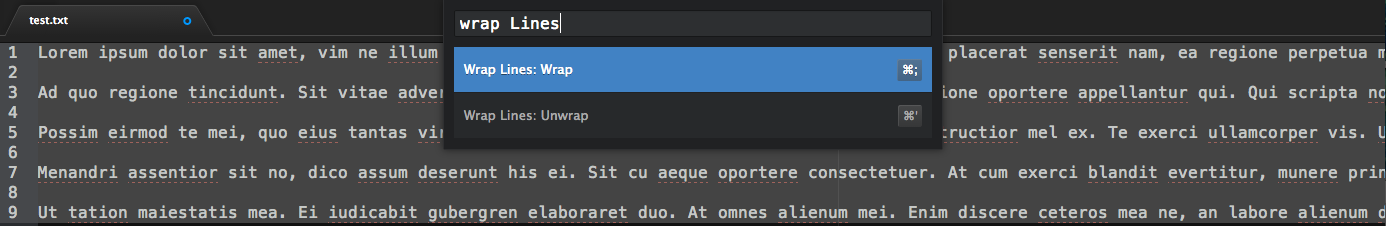

Atom package for wrapping lines

Even though I have always seemed to fall back to Vim in a terminal for my coding, I have been using Atom (with SSHFS) for some time now and, for reasons I don’t quite understand yet, I haven’t really felt the urge to stop. My initial impression was that Atom is a Sublime knock-off with some more flexibility, so I expected that after a short time playing with my invite I would go back to my tried-and-true Vim, since that’s what I did with Sublime. But here I am, and I’m more convinced to stick with it now that it has been open sourced. The ‘gq’ wrap-lines feature was one I sorely missed, so I thought I’d try to script it.

I was surprised at how easy and quick it was to code a package for it, and to subsequently make it available to everyone else through Atom’s package management system (the image above takes you to the package). On a slightly related note, my friend Rob pointed me to this interesting Vim modernization project.

Lamport Stresses Thinking Before Coding

More specifically, he suggests some of the ‘thought’ before coding should be formalized as a specification (like a blueprint) written in the language of mathematics. The TLA specification language uses satisfiability of boolean formulas as a mathematical way to express initial states and the possibilities for a state to transition to other states. The framework provides the programmer a way to (1) think in depth about the possible states before coding and running test cases, (2) better document what an implemented piece of code does making it more convenient for updates later, and (3) automatically find bugs from logical inconsistencies.

This video provides more motivation and examples for what I said above, including a couple of anecdotes that mention the use of TLA by Amazon and Microsoft. Overall, I think he made a good argument that putting more effort into the specification can lead to better design decisions down the line.
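To make the idea of initial states, transitions, and automatic bug-finding a bit more concrete, here is a toy sketch in Python. It is not TLA and not how the TLA tools work internally; it is only a brute-force search over the states of a hypothetical two-process counter that I made up for illustration. The names `INIT`, `next_states`, `invariant`, and `find_violation` are all assumptions of this sketch.

```python
from collections import deque

# Hypothetical toy system: two processes each perform a non-atomic "x = x + 1"
# (read x into a private tmp, then write tmp + 1 back).
# A state is (x, (pc0, tmp0), (pc1, tmp1)).
INIT = (0, ("read", 0), ("read", 0))


def next_states(state):
    """Yield every state reachable in one step (one process moves)."""
    x, *procs = state
    for i, (pc, tmp) in enumerate(procs):
        if pc == "read":
            new_x, new_proc = x, ("write", x)
        elif pc == "write":
            new_x, new_proc = tmp + 1, ("done", tmp)
        else:
            continue  # this process has finished
        new_procs = list(procs)
        new_procs[i] = new_proc
        yield (new_x, *new_procs)


def invariant(state):
    """Desired property: once every process is done, x equals their count."""
    x, *procs = state
    all_done = all(pc == "done" for pc, _ in procs)
    return (not all_done) or x == len(procs)


def find_violation(init, next_states, invariant):
    """Breadth-first search; return a shortest trace to a bad state, or None."""
    parent = {init: None}
    queue = deque([init])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            trace = []
            while state is not None:
                trace.append(state)
                state = parent[state]
            return trace[::-1]
        for nxt in next_states(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None


trace = find_violation(INIT, next_states, invariant)
for step in trace or []:
    print(step)
```

The search reports an interleaving in which both processes read before either writes, so one increment is lost. That is the kind of bug that is easy to miss in test cases but falls out of enumerating the specified states.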

A couple of notes:

(1) This approach is mostly about ‘functional’ specification, so it provides little assistance in implementing such a specification. In this way, it is largely independent of programming language choice/design, compilation, and optimization in practice. Many specific algorithms could satisfy a functional specification. From his talk, I got the impression that he considers these other issues trivial in comparison to specification, which I don’t think is often the case. However, I certainly agree that more thought should be spent at the specification stage, if possible.

(2) Large-scale adoption of this formalism seems like it would be difficult. There is the obvious overhead in teaching people to understand and implement this formalism in their work. Even if knowledge of TLA were part of a programmer’s toolkit, this type of formalism may be far more beneficial for specific, more ‘predictable’ problems. By predictable, I mean that a programmer (or computer) can consider the possibilities of desirable and undesirable states a priori. For example, the task “write a program to produce a pleasing sound” is not only a larger/more general problem, but it is also very difficult to specify exactly. This is an extreme example, but less extreme versions of it are a very common and practical form of programming (e.g. programs that are highly dependent on human satisfaction during use). For these applications, past intuition and seeing how you, a test group of people, and even your customers respond to your program may provide the most valuable information for improvement.

Leslie Lamport is a computer scientist working at Microsoft Research. He recently won the Turing Award (one of the highest honors in computer science), and ever since I learned about Lamport clocks, he has been one of my favorite CS academics.

State of My Gadget Union: HP Chromebook 11

I have made two new Googly purchases recently. The HP Chromebook 11 and the Nexus 5.

Nexus 5 thoughts soon, but after a few weeks with this Chromebook, I have found that it has effectively replaced my tablet and, surprisingly, many things I do on my Macbook Pro Retina 15”. One of the reasons I wanted to try it out was that I found I often wanted to chat or write a quick email or do some basic keyboard-level content input with the tablet, but didn’t want to pull out a full-fledged computer. This got me interested in the announcements for the Chromebook and the new Microsoft Surface (or potentially a new Macbook Air 11”? That would be very tempting). So for me, while the Chromebook is more of a notebook than it is a tablet, I find that I use it more like a tablet: to quickly look up and input content.

Pros in my experience:

- Surprisingly, this is currently my most used device. My Macbook Pro Retina 15” is now used almost solely for larger, visually-intensive projects like creating a presentation or poster. And my tablets have become fancy remote controls lately.

- Super inexpensive

- Incredibly handy: can fit in a tiny netbook/tablet bag

- Great for content input vs. a tablet

- Micro USB charging is very handy: don’t have to carry two chargers

- Display is really good (this is what got me interested in the first place): very nice contrast and viewing angles (even when compared to the Macbook Air)

- Font size scaling in Chrome is nice: iPad + keyboard, for example, doesn’t scale fonts well, so it’s hard to use when placed at a distance: i.e. towards your lap or knee vs. held up near your face. Chrome on a Macbook Air can scale fonts, but that’ll run you about $1,000: and you’ll have a less appealing display at the moment.

- Very light: can easily hold it in one hand

- Nice to use for coding via SSH

Cons:

- Can be laggy/frame-droppy at times: especially when compared to a Chromebook Pixel, Mac or Google/Apple tablet experience. For a similar price, I believe the Asus and Samsung models may be faster, but the displays aren’t as appealing.

- Native apps like Hangouts or Keep don’t appear to support font size adjustment. I just use the browser instead.

- If you were to use this for presentations, I’m not sure how you would give one.

- Low battery life.

For the price, those are the only cons I can think of after weeks of use. This Chromebook may not have been targeted to a geek like me who already has much more powerful, beautiful machines, but it certainly has won me over. Things I’d like to see in the next iteration of entry-level Chromebooks by Google:

- Smoother. Please make this a smoother, more responsive laptop! Maybe the Asus or Samsung models are smoother, but I really liked the display on this one. I think many would pay the premium for this. One of the things I like about Apple products is that no matter what ‘line’ you get from them, you typically don’t experience any major lag in performance for basic tasks, and I think this is a good standard for Google devices.

- Ramp up native Chrome apps to be more like a nice tablet experience. For example, make a native Youtube or Gmail app that immerses you.

- Maybe a touchscreen like a lot of Windows models are going with (or the new Acer C720P!)?

Grabbing individual colors from color maps in matplotlib

Want an individual color from a color map in matplotlib? You would think this would be easy (and it is as you will see below), but as I traversed the documentation, I could not find for the life of me how to do this. After looking at various matplotlib functions, Rob and I finally found that it’s really simple (and apparently undocumented as far as we can tell). Just choose your colormap (e.g. ‘jet’) and call it like a function with a value between 0 and 1: e.g. pylab.cm.jet(.5). This will return you the rgba 4-tuple that you can use for whatever.
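As a minimal sketch of the call described above (written with `matplotlib.cm` rather than `pylab`, and with variable names that are my own): one thing worth knowing is that matplotlib treats a float argument as a position between 0 and 1, while an integer argument is treated as an index into the colormap's lookup table, so `1.0` and `1` are not the same request.

```python
from matplotlib import cm

# Calling a colormap with a float in [0, 1] returns an (r, g, b, a) tuple.
rgba = cm.jet(0.5)
print(rgba)

# The two ends of the map.
low, high = cm.jet(0.0), cm.jet(1.0)

# Five evenly spaced colors, e.g. for coloring five lines in one plot.
evenly_spaced = [cm.jet(i / 4.0) for i in range(5)]
```

Each tuple can be passed anywhere matplotlib accepts a color, such as the `color=` argument of `plot`.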

R and Python (RPy2): Rank-revealing QR Decomposition

I used to use R all the time until I got into Python, NumPy, and SciPy.

In general, however, R has much more sophisticated packages for statistics (I don’t think there’s too much argument here). Unfortunately, R, in my opinion, is a cumbersome language for string manipulations or more general programming (though it is fairly capable).

You can get the best of both worlds with RPy.

I recently wanted to calculate the rank of a matrix via a QR decomposition as an alternative to computing the SVD. I haven’t yet seen code to do this in numpy. R, on the other hand, computes this automatically in its ‘qr’ function. To get this running in my numpy/scipy code, I didn’t even have to worry much about matrix format conversions between the languages. After a couple of ‘imports’ and within 5 minutes I calculated the rank in Python via the R code:

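import rpy2.robjects as robjects

rqr = robjects.r['qr']  # R's qr() returns a list: qr, rank, qraux, pivot

print(rqr(R)[1][0])  # the second element of that list is the rank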
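For comparison, here is a rough sketch of how a QR-based rank estimate could be written without R, using SciPy's column-pivoted QR. This is not the code used in the post, and the function name `qr_rank` and its default tolerance are assumptions of this sketch (the tolerance mimics the usual "largest value times machine epsilon times dimension" rule of thumb, and R's `qr` uses its own tolerance, so borderline cases may differ).

```python
import numpy as np
from scipy.linalg import qr


def qr_rank(a, tol=None):
    """Estimate the rank of `a` from a column-pivoted QR decomposition.

    With pivoting, the magnitudes on the diagonal of R are non-increasing,
    so the rank is the number of diagonal entries above a tolerance.
    """
    a = np.asarray(a, dtype=float)
    if a.size == 0:
        return 0
    r, _pivots = qr(a, mode="r", pivoting=True)
    diag = np.abs(np.diag(r))
    if tol is None:
        # Assumed default: scale machine epsilon by the largest diagonal entry.
        tol = max(a.shape) * np.finfo(float).eps * diag[0]
    return int(np.sum(diag > tol))


# A 3x3 matrix whose rows are all multiples of one another.
example = np.outer([1.0, 2.0, 3.0], [1.0, 1.0, 1.0])
print(qr_rank(example, tol=1e-10))
```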

Speed up your Python: Unladen vs. Shedskin vs. PyPy vs. Cython vs. C

Lately I’ve found that prototyping code in a higher-level language like Python is more enjoyable, readable, and time-efficient than directly coding in C (against my usual instinct when coding something that I think needs to go fast). Mostly this is because of the simplicity in syntax of the higher level language as well as the fact that I’m not caught up in mundane aspects of making my code more optimized/efficient. That being said, it is still desirable to make portions of the code run efficiently and so creating a C/ctypes module is called for.

I recently created an application (I won’t go into the details now) that had a portion of it that could be significantly sped up if compiled as a C module. This spawned a whole exploration into speeding up my Python code (ideally while making minimal modifications to it).

I created a C module directly, used the Shedskin compiler to compile my Python code into C++, and tried the JIT solutions PyPy and Unladen Swallow. The time results for running the first few iterations of this application were surprising to me:

cpython: 59.174s

shedskin: 1m18.428s

c-stl: 12.515s

pypy: 10.316s

unladen: 44.050s

cython: 39.824s

While this is not an exhaustive test, PyPy consistently beats a handwritten module using C++ and STL! Moreover, PyPy required little modification to my source (itertools had some issues) [1]. I’m surprised that Unladen and Shedskin took so long (all code was compiled at O3 optimization and run on multiple systems to make sure the performance numbers were relatively consistent).

Apparently, out-of-the-box solutions these days can offer nearly a 6x improvement over default Python for a particular app (59.174s vs. 10.316s here), and I wonder what aspects of PyPy’s system account for this large performance improvement (their JIT implementation?).

[1] Unladen required no modifications to my program to run properly and Shedskin required quite a few to get going. Of course, creating a C-based version took a moment :-).

Update 1: Thanks for the comments below. I added Cython, re-ran the analysis, and emailed off the source to those who were interested.

Update 2: The main meat of the code is a nested for loop that does string slicing and comparisons, and it turns out that the slicing and comparisons were the bottleneck for Shedskin. The new numbers are below, with a faster matching function for all tests (note that this kind of addition requires what I call ‘code twiddling’, where we find ourselves fiddling with a very straightforward, readable set of statements to gain efficiency).

cpython: 59.593s

shedskin0.6: 8.602s

shedskin0.7: 3.332s

c-stl: 1.423s

pypy: 8.947s

unladen: 29.163s

cython: 26.486s (3.5s after adding a few types)

So C comes out the winner here, but Shedskin and Cython are quite competitive. PyPy’s JIT performance is impressive and I’ve been scrolling through some of the blog entries on their website to learn more about why this could be. Thanks to Mark (Shedskin) and Maciej (PyPy) for their comments in general and to Mark for profiling the various Shedskin versions himself and providing a matching function. It would be interesting to see if the developers of Unladen and Cython have some suggestions for improvement.

I also think it’s important not to look at this comparison as a ‘bake-off’ to see which one is better. PyPy is doing some very different things than Shedskin, for example. Which one you use at this point will likely be highly dependent on the application and your urge to create more optimized code. I think in general hand-writing C code and code-twiddling it will almost always get faster results, but this comes at the cost of time and headache. In the meantime, the folks behind these tools are making it more feasible to take our Python code and optimize it right out of the box.
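To illustrate what ‘code twiddling’ can look like, here is a hypothetical sketch. It is not the code from this post (which was only emailed to those who asked) and not the matching function Mark provided; the function names and the counting task are made up. The first version is the readable slice-and-compare loop; the second avoids building a temporary slice by comparing character by character. Which of the two runs faster depends heavily on the interpreter or compiler, so treat it as an illustration of the trade-off rather than a recommendation.

```python
def count_matches_sliced(text, pattern):
    """Readable version: slice out each window and compare it to the pattern."""
    n, m = len(text), len(pattern)
    count = 0
    for i in range(n - m + 1):
        if text[i:i + m] == pattern:
            count += 1
    return count


def count_matches_twiddled(text, pattern):
    """'Twiddled' version: no temporary slices, stop at the first mismatch."""
    n, m = len(text), len(pattern)
    count = 0
    for i in range(n - m + 1):
        for j in range(m):
            if text[i + j] != pattern[j]:
                break
        else:
            # The inner loop finished without a mismatch: a full match.
            count += 1
    return count


print(count_matches_sliced("abcabc", "abc"), count_matches_twiddled("abcabc", "abc"))
```

Both functions return the same counts; the second is simply harder to read, which is the cost the paragraph above is pointing at.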

Update 3: I also added (per request below :-)) just a few basic ‘cdef’s and types to my Cython version. It does a lot better, getting about 3.5s on average per run!

Mendeley: Try It

Mendeley is pretty cool.

I’ve been using it for a week or so and it’s definitely integrated itself well without being obtrusive.

Some things I really like:

- Cross-platform: OS X, Linux, Windows

- Drag-and-drop your PDFs and it figures out the metadata as best as it can. If it gets something slightly off, you press a button and a Google Scholar check usually fixes it all up

- PDF viewing is quite speedy and natural for me with their tabbed interface

- Notes, highlights, etc: syncs with the cloud and can be integrated with other users that you share your documents with

- I find storing associated URLs to be a very handy feature (links to the web site, supplementary info, etc.)

- Cleanly store your PDFs in a directory of your choice (e.g. I use Dropbox to view them on my iPad)

Requests (maybe I can request some of these?):

- iPad app (supposedly on the way)*

- Sometimes highlighting/selecting text isn’t as accurate and intuitive as it is in OS X’s Preview application, which does selection brilliantly

- Parse PDFs for their citations and do *cool* things with this. What can you tell me about papers I may be interested in by looking at the citation network of my collection?

- If Mendeley is like iTunes for music, it would be nice to have a slick storefront to browse related papers of interest and import.